If they are not lithium, it's a box vented to the outside with a powered fan. It must be in a dedicated mechanical room, must have a smoke detector, and must have 6'9" of headroom. Most of those apply to lithium as well, except the venting and the box.

Allen Jackson wrote:Out of curiosity David, what if they aren't "lithium" batteries?

Allen Jackson wrote:

David Baillie wrote:Hi Jackie,

I do not see anything on the link about UL9540 compatibility or certification in Europe or North America.

I haven't seen anything about UL9540 compatibility either, so I started digging...

For the most part, it doesn't matter, and it's NOT applicable!

Let me explain. UL 9540 is the standard to which energy storage systems are tested.

UL 9540A is the test method for those systems, used to determine and evaluate their potential for thermal runaway in a fire.

(This is used to determine how close they can be to each other, and most of the requirements don't apply to single- and two-family residences.)

UL 9540B was developed to specifically address residential energy storage systems.

While UL 9540 is NOT just for lithium ion batteries, it really doesn't have much to say about LiFePO4 batteries. Because it's primarily focused on fire hazards and fire safety, it covers lead acid batteries, which can generate explosive hydrogen gas, and lithium ion batteries, which can be driven into thermal runaway by damage or heat. Since lithium iron phosphate batteries don't exhibit those characteristics, they already inherently pass the basic cell-level test requirements of UL 9540A (or UL 9540B), and per the testing norms, if the cells cannot be driven into thermal runaway, there's no further need to test the module or system that they are installed in.
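The early-exit logic described above (test at the cell level first, and only move up a level if that level's criteria aren't met) can be sketched roughly as follows. This is a simplified illustration, not the standard's text: the four levels (cell, module, unit, installation) follow UL 9540A's general structure, but each level's real performance criteria are collapsed into a single hypothetical pass/fail boolean.

```python
from enum import Enum
from typing import Dict, List


class Level(Enum):
    # Test levels in the order they are escalated through.
    CELL = 1
    MODULE = 2
    UNIT = 3
    INSTALLATION = 4


def levels_to_test(criteria_met: Dict[Level, bool]) -> List[Level]:
    """Return the levels that get tested, stopping at the first level whose
    (hypothetical, simplified) criteria are met. At the cell level, "met"
    stands in for "the cell could not be driven into thermal runaway"."""
    tested = []
    for level in Level:  # Enum iteration follows definition order
        tested.append(level)
        if criteria_met.get(level, False):
            break  # no need to escalate to the next level
    return tested


# A cell that won't go into thermal runaway ends testing at the cell level.
print(levels_to_test({Level.CELL: True}))
```

A missing entry is treated as "criteria not met", so a system that never satisfies any level's criteria is walked through all four levels.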

UL 9540B was driven primarily by the California fire code, which had become stricter than UL 9540, to address gaps in coverage of residential systems (largely due to the rising popularity of Tesla Powerwalls and Tesla vehicles), because they DO use lithium ion batteries and are most commonly found in residential settings.

Unless you're planning to install a DIY lithium ion based system (or, an outside chance, a large-scale installation of lead acid batteries, because of the hydrogen generation), the applicable regulatory agencies aren't likely to pay much attention to your system.

The fervor over insurance companies and inspectors not liking LiFePO4 battery systems is only possible if they are ignorant of the differences between lithium ion and lithium iron phosphate batteries. It's even likely that inspectors will see the word "lithium" and automatically assume that a system is equivalent to a Tesla Powerwall. That doesn't mean it can be set on fire, though...

Allen Jackson wrote:There has been a bit of talk in this thread about the fear of what happens if the BMS in a lithium battery dies. If those batteries are a commercial pre-built system, I suppose those concerns are real factors in the decision process. If you build a battery from parts, it's really not an issue: you can start with the cheapest parts around, and if/when they fail, you can replace them, upgrade to less cheap parts, or swap in the best-quality parts available, now that you have experienced the functionality the battery system has brought to your life.

I'm no more a fan of buying commercial battery systems than I am of buying a pre-built computer for a specialized use. If you want a 'gaming' computer, build it to meet the intersection of your needs and your budget; don't buy into whatever some sales/marketing team decided would be flashy enough to bump their profit margins...

LiFePO4 batteries have become such a commodity item, so easy to duplicate with readily available components, that it's hard to justify the extra expense of buying the package, unless you really want/need the convenience of buying it, plugging it in, and quickly moving on to something else. For the same reasons I'd avoid pre-built 'gaming' computers: manufacturers of battery systems are still for-profit businesses that will cut corners to appeal to the middle of the bell curve, since that's where the profit is. If you happen to want or need anything just outside of that, you end up compromising your needs, or wasting time/money redoing it just to get it right. DIY doesn't mean you never have to redo anything; it just increases the speed with which you can, and it drops the cost of doing so.

Case in point: last week I built a LiFePO4 battery that I was & am very happy with, but I wasn't happy with the BMS, or the "brains" of the battery. Yesterday, I replaced the old BMS with one much better matched to my needs, and it pretty much just involved using a screwdriver to swap the connections.

Jackie Lei wrote:Most people talk about capacity, but the bigger difference is how the system behaves day to day.

With lead acid, once you get past ~80% it barely takes charge, so a lot of solar ends up wasted. LiFePO4 keeps accepting charge almost to the top, so you actually use more of what you generate.
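To make the charge-acceptance point concrete, here is a toy comparison. The acceptance curves are illustrative assumptions, not measured data: lead acid is modeled as accepting full solar input below 80% state of charge and tapering linearly to zero at 100%, while LiFePO4 is modeled as accepting full input until the pack is full. The hourly solar profile, bank size, and starting charge are all hypothetical.

```python
from typing import Callable, List


def lead_acid_acceptance(soc: float) -> float:
    """Toy model: full acceptance below 80% SOC, tapering linearly to zero at 100%."""
    if soc < 0.8:
        return 1.0
    return max(0.0, (1.0 - soc) / 0.2)


def lifepo4_acceptance(soc: float) -> float:
    """Toy model: full acceptance until the pack is full."""
    return 1.0 if soc < 1.0 else 0.0


def harvest(solar_kw: List[float], capacity_kwh: float, start_soc: float,
            acceptance: Callable[[float], float]) -> float:
    """Energy (kWh) stored from an hourly solar profile under an acceptance curve.
    Acceptance is evaluated once at the start of each hour (a coarse assumption)."""
    soc = start_soc
    stored = 0.0
    for power in solar_kw:  # each entry is the average kW over one hour
        room = max(0.0, (1.0 - soc) * capacity_kwh)  # kWh left before full
        accepted = min(power * acceptance(soc), room)
        soc += accepted / capacity_kwh
        stored += accepted
    return stored


profile = [1, 2, 3, 3, 2, 1]  # hypothetical hourly solar output, kW
print(harvest(profile, 20.0, 0.5, lifepo4_acceptance))
print(harvest(profile, 20.0, 0.5, lead_acid_acceptance))
```

With this hypothetical 20 kWh bank starting at 50%, the LiFePO4 model stores 10 kWh (it fills up), while the lead acid model stores about 9.6 kWh and ends the day around 98%. The real-world gap depends on the actual charge profile and absorption settings.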

It’s also not just a battery swap: a lot of issues people see (cold weather behavior, charging logic, weird cutoffs) are more about system setup than the chemistry itself. For example, setups like this 48V LiFePO4 system are basically designed around that kind of full-solar usage: https://cmxbattery.com/product/48kwh-lifepo4-solar-battery-51-2v-942ah-300a-bms-home-backup/

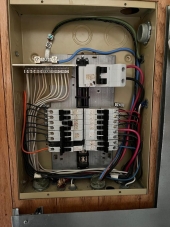

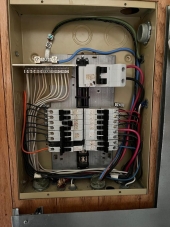

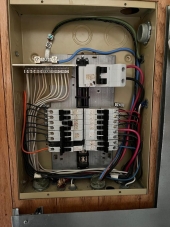

8 gauge copper is 40 amps. Ned is absolutely right: you will need an AC disconnect outside.

Jon Bee wrote:

Yup, I think that is code in Ontario too. I will get that 60A breaker done too, in the solar shed next to the inverter, then leave the 100A in the new (or old) panel.

Ned Harr wrote:

Jon Bee wrote:So, if I do the new panel, do I still use a 100 Amp main breaker in the new panel if I get the 60 Amp breaker installed on the output of the inverter? Seems like the 100 Amp main breaker really does nothing at that point. Or I could put the 60 Amp breaker in the new panel as the main breaker and not do the one at the inverter.

First means of disconnect should be outside the cabin, not in your main panel, which you’ve said is in the kitchen/will be in a utility room. Whether you keep a main breaker in the panel might be a convenience or budgetary choice, or something to decide based on off-grid expertise that I personally don’t have, but I do think your first means of disconnect needs to be outside, at least if the latest codes up there are anything like they are down here in the US.

Last piece of the puzzle: figure out what gauge wire is on the 50' run from the inverter in the solar shed to the AC panel in the cabin. I think (hope) it's #6. It might be #8, so I may have to go with a 50A breaker. I can't get out there to confirm that; there's still too much snow on the 5 km access road.
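The two figures for #8 in this thread (40 amps, and a 50A breaker) plausibly come from different temperature columns of the ampacity table. A minimal sketch, assuming NEC Table 310.16 copper values (Ontario works from the Canadian Electrical Code's own tables, which should be checked instead) and ignoring any "next size up" allowance. Which column applies depends on the temperature rating of the breaker and equipment terminals.

```python
# Copper conductor ampacities (A) by AWG size and terminal temperature column,
# taken from NEC Table 310.16. Assumption: verify against the CEC tables in Ontario.
COPPER_AMPACITY = {
    8: {"60C": 40, "75C": 50},
    6: {"60C": 55, "75C": 65},
}

# Common standard breaker ratings in amps, up to 100 A.
STANDARD_BREAKERS = [15, 20, 25, 30, 35, 40, 45, 50, 60, 70, 80, 90, 100]


def largest_breaker_for(gauge: int, column: str) -> int:
    """Largest standard breaker that does not exceed the conductor's ampacity.
    This sketch ignores any code allowance for rounding up to the next size."""
    ampacity = COPPER_AMPACITY[gauge][column]
    return max(r for r in STANDARD_BREAKERS if r <= ampacity)


for gauge in (8, 6):
    for column in ("60C", "75C"):
        print(f"#{gauge} copper, {column}: {largest_breaker_for(gauge, column)} A")
```

Under these assumptions, #8 gives 40 A at 60 °C and 50 A at 75 °C, and #6 gives 60 A only at the 75 °C column.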

OK, one more panel question. Sorry this has snowballed. If I change out the panel, do I need to buy AFCI (arc fault) breakers for all bedrooms and living areas, as per the current code, or can I just buy new standard 15A breakers to match what was in my old panel? Or will the ESA inspector fail that?

Does your inverter have an overcurrent breaker for AC out? If not, install a 60 amp disconnect near the inverter, leave the 100 amp panel in place, and add circuits to it. If you pull the permit as a homeowner in Ontario and the ESA inspects and approves it, there is legally no difference from an electrician doing it. For resale and relationship peace of mind, I would pull the permit. I've never had grievances with the ESA guys and have had them flag some major boo boos along the way.

Jon Bee wrote:

Ned Harr wrote:If you can do it yourself, a panel change is not difficult, especially in a small cabin with hopefully not a ton of circuits. The cost then is ~the price of the panel & breakers plus a day of work in the dark (by flashlight usually). If you have some basic tools and some knowledge of how to wire a panel it’s pretty easy, actually kinda relaxing and fun.

Of course, if you don’t know how to do it and don’t feel confident you could learn, then don’t, as it could be dangerous/hazardous.

Twenty-five years ago I had a cabin built, and I wired the whole thing plus installed the panel and mast myself. Manitoba Hydro inspected it and passed it. I sold that place two summers ago and got this new cabin we are in near Kenora. With this new (40-year-old) cabin, I had an addition built last fall, and I wired all of the new circuits for it. Now I figured I'd get an electrician to pull a permit, do the panel replacement, and get my wiring inspected at the same time. I'm going that route to make sure I never have any issues with the insurance company (and for peace of mind, mostly for my wife). But I also don't want an inspector looking at any existing wiring (not that I anticipate problems).

So I either leave the old panel and just add breakers for my new circuits and not worry about the 100 Amp breaker being over-sized or go the other route with permit/electrician/new panel with 60 Amp breaker. Or the third option, do the panel myself (and tell my wife an electrician did it).

It's the breaker at the inverter that needs to be sized correctly, not the one in the panel. Leave the 100 amp in place and make sure the inverter breaker is small enough to trip at less than 80 percent of 60 amps (6 gauge wire), or that the inverter cannot put out more than that. You have to have a correctly sized breaker, but the ESA is looking for it at the source, not at the destination. That is based on all the installations I have ever done in Ontario.

Jon Bee wrote:I am totally off grid. With my current old system, the inverter feeds the main 100 Amp breaker in the cabin panel via #6 conductors in conduit. I am upgrading my solar system, and my new 6000W inverter will limit output current to 42A continuous (90A surge). I know the 100 Amp breaker in my panel is oversized. My panel is a Federal Pioneer (Canadian, not the same as the US version with issues), and the 100 Amp main breaker cannot be swapped out.

I would still like to get the breaker in the panel to the correct size which I believe should be a 60 Amp breaker but would need to do a panel upgrade to go to either a 60 Amp main breaker or a 60 Amp backfeed breaker. The new 100 Amp panels allow you to swap out the main breaker so could put a 60 Amp there.

Wondering how others off grid have their panel and breakers wired?
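The 80 percent figure above is the usual continuous-load rule: a standard (non-100%-rated) breaker should carry no more than 80% of its rating continuously, which is the same as requiring a breaker of at least 125% of the continuous current. A minimal sketch, assuming the inverter's 42A continuous output is treated as a continuous load and using common standard breaker sizes:

```python
# Common standard breaker ratings in amps, up to 100 A.
STANDARD_BREAKERS = [15, 20, 25, 30, 35, 40, 45, 50, 60, 70, 80, 90, 100]


def continuous_ok(load_amps: float, breaker_amps: int) -> bool:
    """True if a continuous load stays at or below 80% of the breaker rating.
    Multiplying by 1.25 (exact in binary floating point) avoids 0.8 rounding error."""
    return load_amps * 1.25 <= breaker_amps


def max_continuous(breaker_amps: int) -> float:
    """Largest continuous load (A) allowed on a breaker under the 80% rule."""
    return breaker_amps / 1.25


def smallest_breaker(load_amps: float) -> int:
    """Smallest standard breaker that satisfies the 80% rule for this load."""
    for rating in STANDARD_BREAKERS:
        if continuous_ok(load_amps, rating):
            return rating
    raise ValueError("load exceeds the largest breaker in this list")


print(smallest_breaker(42))  # inverter's rated continuous output
print(max_continuous(60))
```

For 42 A this gives 60 A (42 × 1.25 = 52.5 A, so 50 A is too small), and a 60 A breaker allows up to 48 A continuous. The wire on the run still has to be rated for whatever breaker is chosen.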

John Weiland wrote:The impact here with our rural coop in Minnesota is not so much the rate of pay-back for excess power generated. It's that connection fees (billed monthly) are beginning to creep up at a worrisome rate. I'm not sure what their rate for adding grid-intertie to the mix is just now, but even without it, our current connection fee is over $60 per month, even if we were to not draw any power from them during that month. I understand that such fees are necessary to maintain the grid for all, but will have to weigh this as a factor in the homestead economics. What I certainly do like and appreciate is the greater affordability, on-site power storability, generation efficiency, and plug-n-play nature of modern solar offerings. It comes with a re-education for the homeowner to be sure, but the potential for a bit more power independence is sizable.

One question for you in Canada, without getting too lost in the weeds of particulars: does Canada as a country, or do the provinces (or cities/counties), provide financial incentives for solar/alternative power installation by homeowners? A useful swath of our tax incentives in the US has disappeared under the current government... could well come back after changes occur in that realm... but I'm curious whether home power generation up there is seen somewhat in the same light as health care, where the costs of the service are distributed across residents differently in the two nations. Thanks... and appreciative of these discussion points from both of you. For solar especially, it's so nice to hear how those in northern climes are balancing summer power generation with winter deficits and working out affordable plans with grid power providers.